More Data ≠ Better AI

In healthcare AI, scale is often treated as a proxy for progress. Larger datasets, more whole-slide images, and broader patient cohorts are assumed to naturally translate into better-performing models. While data volume is undeniably important, it is not sufficient.

Without harmonization, increasing dataset size can introduce uncontrolled heterogeneity. Models trained on such data frequently learn spurious correlations (for example, site-specific staining or scanner signatures) rather than clinically meaningful patterns.

This leads to a well-known failure mode: strong internal validation performance paired with poor external generalization. In this context, adding more unstructured data does not improve models; instead, it amplifies noise and bias.
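One way to surface the gap between internal and external performance is to hold out entire sites during evaluation rather than splitting samples at random. The sketch below is a minimal illustration; the function name `leave_one_site_out` and the use of a plain list of site identifiers are assumptions made for this example, not part of any specific pipeline.

```python
def leave_one_site_out(sites):
    """Yield (held_out_site, train_indices, test_indices) for each site.

    `sites` is a list with one site identifier per sample (hypothetical input).
    Each site is used exactly once as the external test set.
    """
    for held_out in sorted(set(sites)):
        train = [i for i, s in enumerate(sites) if s != held_out]
        test = [i for i, s in enumerate(sites) if s == held_out]
        yield held_out, train, test


if __name__ == "__main__":
    sample_sites = ["a", "b", "a", "c"]
    for site, train_idx, test_idx in leave_one_site_out(sample_sites):
        print(site, train_idx, test_idx)
```

A model that scores well on random splits but poorly when a site is held out is likely relying on site-specific cues.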

Where Variability Comes From

Oncology and computational pathology data are inherently heterogeneous, with variability emerging across multiple system layers.

Pre-analytical variability arises from tissue handling, fixation protocols, and staining procedures. Analytical variability is introduced by scanner hardware, resolution differences, compression settings, and artifacts. Annotation variability reflects inter-observer disagreement, evolving clinical definitions, and inconsistent labeling schemas.

Additionally, population variability (e.g., demographics, disease prevalence) and workflow variability (e.g., reporting standards, data capture systems) further fragment datasets.

These sources of variation are not isolated; they interact. Without harmonization, datasets become a mixture of incompatible distributions that obscure the underlying biological signal.

Harmonization as a Prerequisite for Generalization

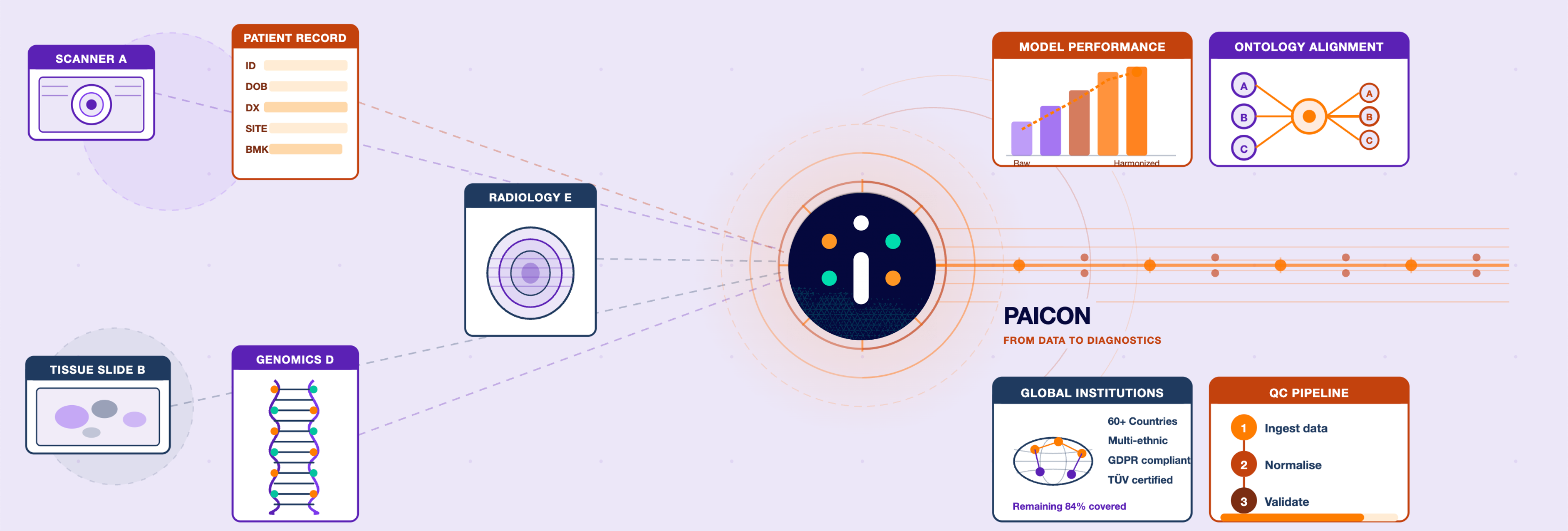

Harmonization aligns data across institutions, technologies, and workflows, enabling models to focus on clinically relevant features.

This operates at multiple levels: technical (e.g., color normalization, resolution standardization), semantic (e.g., ontology alignment), process (e.g., standardized acquisition protocols), and statistical (e.g., bias correction and balancing).
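As a rough illustration of two of these levels, the sketch below maps site-specific labels onto a shared vocabulary (semantic) and standardizes a scalar feature within each site (a simple stand-in for technical normalization). The site names, the label vocabulary in `LABEL_MAP`, and both function names are hypothetical; real pipelines would use an established ontology and an image-level method such as stain normalization.

```python
from statistics import mean, pstdev

# Hypothetical site-specific label vocabularies mapped onto one shared schema.
LABEL_MAP = {
    "site_a": {"tumour": "tumor", "normal": "benign"},
    "site_b": {"TUM": "tumor", "BEN": "benign"},
}


def harmonize_label(site, raw_label):
    """Semantic level: translate a site-local label into the shared vocabulary."""
    try:
        return LABEL_MAP[site][raw_label]
    except KeyError:
        # Fail loudly rather than guess: unmapped labels need human review.
        raise ValueError(f"no mapping for label {raw_label!r} from {site!r}") from None


def standardize_per_site(values_by_site):
    """Technical level (simplified): z-score a scalar feature within each site."""
    out = {}
    for site, values in values_by_site.items():
        mu = mean(values)
        sigma = pstdev(values) or 1.0  # constant input: avoid division by zero
        out[site] = [(v - mu) / sigma for v in values]
    return out
```

Per-site standardization removes every site-level shift, including any that reflects real population differences, so in practice it should be applied only to features where the shift is known to be technical.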

Rather than eliminating variability, harmonization structures it. This makes variability explicit, interpretable, and learnable, allowing models to generalize across real-world settings.

Evidence: Diversity and Balance Outperform Raw Volume

Our recent work on medical foundation models shows that data quality, diversity, and balance can outweigh sheer dataset size: foundation models trained on a smaller but well-balanced and diverse dataset performed competitively with models trained on larger, unstructured data collections.

The key insight is that structured diversity enables efficient representation learning. When datasets are harmonized and balanced across domains, models require fewer samples to capture the underlying data manifold.
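Balancing across domains can be approximated with inverse-frequency sampling weights, so that each domain contributes equally in expectation regardless of how many samples it holds. This is a minimal sketch under that assumption; `balanced_sampling_weights` is a hypothetical helper and is not the procedure used in the work described above.

```python
import random
from collections import Counter


def balanced_sampling_weights(domains):
    """Return one weight per sample so that every domain has equal total weight.

    `domains` holds one domain identifier (e.g. site or scanner) per sample.
    The returned weights sum to 1.
    """
    counts = Counter(domains)
    n_domains = len(counts)
    return [1.0 / (n_domains * counts[d]) for d in domains]


if __name__ == "__main__":
    domains = ["a", "a", "a", "b"]
    weights = balanced_sampling_weights(domains)
    # Draw a batch in which domains "a" and "b" are equally likely.
    batch = random.choices(range(len(domains)), weights=weights, k=8)
    print(weights, batch)
```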

This challenges the prevailing assumption that performance scales monotonically with data volume.

Reproducibility and Clinical Trust

Reproducibility is a fundamental requirement for clinical AI. Models must behave consistently across sites, populations, and time.

Unharmonized data introduces hidden dependencies on data source characteristics, making model behavior unstable and difficult to interpret. This directly impacts regulatory approval and clinician trust.

Harmonized data pipelines enable traceability: a clear lineage of how data was acquired, processed, and annotated. This transparency is essential for validation, auditing, and post-deployment monitoring.

Harmonization Enables Meaningful Diversity and Equity

A common misconception is that harmonization reduces diversity. In reality, it enables meaningful diversity.

Unharmonized datasets often over-represent specific institutions or populations, leading to biased models. Harmonization allows heterogeneous data sources to be integrated while preserving their structure.

This enables explicit evaluation across subgroups and supports equitable AI systems that perform consistently across demographics, geographies, and clinical contexts. Diversity without harmonization introduces bias; harmonization turns diversity into a measurable and controllable strength.
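Explicit subgroup evaluation can be as simple as computing a metric per group and reporting the spread. The sketch below uses accuracy for brevity; the function names and the choice of a max-minus-min gap are assumptions for illustration, and a clinical evaluation would typically use task-appropriate metrics with confidence intervals.

```python
from collections import defaultdict


def accuracy_by_subgroup(y_true, y_pred, groups):
    """Compute accuracy separately for each subgroup label in `groups`."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {group: correct[group] / total[group] for group in total}


def worst_group_gap(per_group):
    """Difference between the best and worst subgroup scores."""
    return max(per_group.values()) - min(per_group.values())
```

This only works when subgroup attributes are recorded consistently across sources, which is itself a product of harmonization.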

Why Harmonization Must Be Designed Into Data Systems

Harmonization cannot be retrofitted at scale. It must be embedded into the design of data systems from the beginning.

This includes standardized data models and ontologies, governance frameworks across institutions, automated quality control pipelines, and robust data versioning with lineage tracking.
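To make lineage tracking concrete, the sketch below models a per-sample record whose fingerprint changes whenever acquisition metadata or processing steps change. The class `LineageRecord`, its field names, and the example values are hypothetical; a production system would more likely build on an existing data-versioning tool.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class LineageRecord:
    """Immutable description of how one sample was acquired and processed."""
    sample_id: str
    site: str
    scanner: str
    stain_protocol: str
    schema_version: str
    processing_steps: tuple = ()

    def with_step(self, step):
        """Return a new record with one more processing step appended."""
        return replace(self, processing_steps=self.processing_steps + (step,))

    def fingerprint(self):
        """Deterministic SHA-256 hash over all fields, usable as a version key."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


if __name__ == "__main__":
    raw = LineageRecord("slide-001", "site_a", "scanner_x", "HE_v2", "1.0")
    processed = raw.with_step("color_norm_v1")
    print(raw.fingerprint() != processed.fingerprint())
```

Because records are immutable, every processing step yields a new fingerprint, which gives audits and post-deployment monitoring a stable reference to the exact data state a model saw.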

Crucially, harmonization is not a one-time effort. It is a continuous process that evolves alongside clinical practice and technology.

Conclusion

In healthcare AI, data volume alone does not create value. Without harmonization, scale leads to fragmentation, bias, and reduced generalizability.

Harmonization transforms data into a structured, interoperable asset. It enables generalization, supports reproducibility, and ensures equitable performance.

In healthcare AI, success will depend less on how much data you have and more on how well that data is standardized, integrated, and translated into reliable model performance.