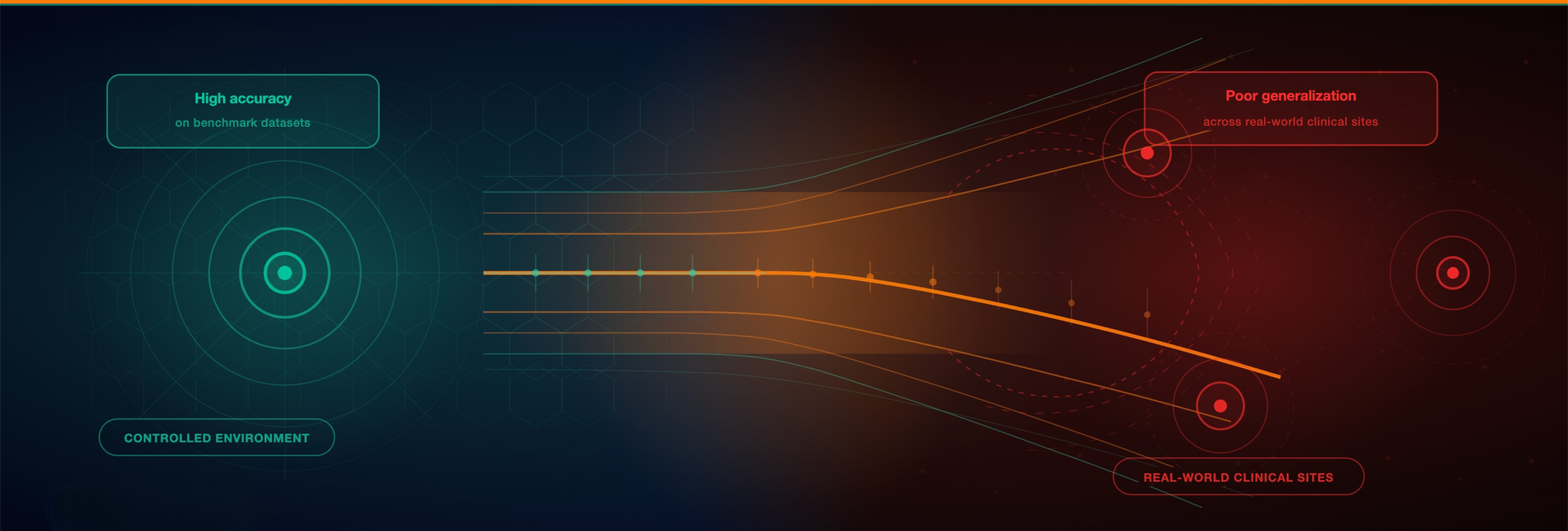

Studies of artificial intelligence in oncology continue to report impressive performance metrics, and many digital pathology AI models achieve AUC values above 0.90 in internal validation. Yet a critical issue persists across the field:

Cancer AI often fails to generalize across institutions.

As AI systems move toward clinical deployment, the central challenge is no longer model architecture. It is external validation and cross-site robustness. The growing generalization gap between research performance and real-world performance is emerging as the defining bottleneck in medical AI.

The Generalization Gap in Digital Pathology AI

Internal validation does not equal clinical reliability.

A systematic review of artificial intelligence in digital pathology highlighted major heterogeneity in study design and incomplete reporting of pre-analytical variables such as fixation and staining protocols, factors that directly affect AI performance [1]. Without structured technical metadata, reproducibility across sites becomes fragile.

Research on domain shift in histopathology shows that deep learning models are highly sensitive to variations in scanners, laboratories, and staining workflows [2]. When these models are applied to slides from unseen institutions, performance often declines.

Even more concerning, recent evidence suggests that pathology foundation models are sensitive to scanner-specific signals, meaning models may learn acquisition artifacts rather than tumor biology [3]. The result is strong internal performance but weak external performance. In short, a high AUC within a development cohort does not guarantee that a medical AI model will generalize.

Why External Validation Fails

Three structural factors consistently drive the generalization gap in cancer AI:

1. Domain Shift Across Institutions

Variability in scanner hardware, slide preparation, staining protocols, and digitization pipelines introduces systematic distribution shifts. Without harmonization, AI models tend to overfit to site-specific data patterns [2].
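To make harmonization concrete, here is a minimal sketch of one simple idea: shift and scale each color channel of a slide's pixels so that its mean and standard deviation match those of a reference slide. This is a hypothetical, simplified illustration in the spirit of Reinhard-style color normalization; the original method works in a decorrelated color space rather than RGB, and real pathology pipelines usually rely on stain-aware methods. The function names and the pixel representation (RGB tuples on a 0 to 255 scale) are assumptions for illustration only.

```python
from statistics import mean, pstdev

# Assumed representation: one pixel as an (R, G, B) tuple on a 0-255 scale.
Pixel = tuple[float, float, float]


def channel_stats(pixels: list[Pixel]) -> list[tuple[float, float]]:
    """Return (mean, population standard deviation) for each of the three channels."""
    stats = []
    for c in range(3):
        values = [p[c] for p in pixels]
        stats.append((mean(values), pstdev(values)))
    return stats


def match_channel_stats(source: list[Pixel], reference: list[Pixel]) -> list[Pixel]:
    """Rescale each channel of `source` so its mean and std match `reference`.

    Simplified sketch: operates directly in RGB, unlike Reinhard-style
    normalization, which uses a decorrelated color space.
    """
    src = channel_stats(source)
    ref = channel_stats(reference)
    out = []
    for p in source:
        new = []
        for c in range(3):
            s_mean, s_std = src[c]
            r_mean, r_std = ref[c]
            # A constant channel (std 0) is only shifted, never scaled.
            scale = r_std / s_std if s_std > 0 else 1.0
            v = (p[c] - s_mean) * scale + r_mean
            new.append(min(255.0, max(0.0, v)))  # clip to the valid 8-bit range
        out.append(tuple(new))
    return out
```

Because the statistics are global per slide, this kind of matching can also wash out genuine biological differences in staining intensity, which is one reason stain-aware methods are generally preferred in practice.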

2. Shortcut Learning and Hidden Confounders

AI systems can exploit technical artifacts correlated with labels. When acquisition characteristics align with diagnostic categories, models appear accurate internally but fail in new environments [3].
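One way to check for this kind of shortcut is a simple probe. If a trivial classifier can predict the acquisition site from a model's features, those features carry site information that a downstream model could exploit. The sketch below is a hypothetical illustration, not the benchmark used in [3]. It assumes per-slide feature vectors (for example, embeddings from a foundation model) paired with site labels, and measures leave-one-out nearest-centroid accuracy against a majority-class baseline.

```python
import math
from collections import defaultdict


def centroid(vectors: list[list[float]]) -> list[float]:
    """Component-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]


def site_probe_accuracy(features: list[list[float]], sites: list[str]) -> float:
    """Leave-one-out accuracy of predicting each slide's site from its features.

    Each slide is assigned to the site whose centroid (computed without that
    slide) is nearest in Euclidean distance.
    """
    correct = 0
    for i, (x, true_site) in enumerate(zip(features, sites)):
        groups: dict[str, list[list[float]]] = defaultdict(list)
        for j, (f, s) in enumerate(zip(features, sites)):
            if j != i:  # leave the current slide out
                groups[s].append(f)
        centroids = {s: centroid(vs) for s, vs in groups.items()}
        predicted = min(centroids, key=lambda s: math.dist(x, centroids[s]))
        correct += predicted == true_site
    return correct / len(features)


def majority_rate(sites: list[str]) -> float:
    """Accuracy of always guessing the most common site (a chance baseline)."""
    return max(sites.count(s) for s in set(sites)) / len(sites)
```

Accuracy well above the majority-class rate suggests that site information is present in the features. It does not by itself prove that a diagnostic model relies on that information, so a probe like this is a screening step rather than a verdict.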

3. Data Leakage and Inflated Performance

Improper dataset separation (for example, slides or tiles from the same patient appearing in both training and test sets) and other methodological pitfalls can artificially inflate performance metrics. Data leakage remains a widespread issue in biological machine learning and directly undermines external validity [4].
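A common safeguard against this kind of leakage is to split data by patient rather than by slide or tile, so that no patient contributes to both the training and the test set. The sketch below assumes records are (slide_id, patient_id) pairs; the function name, default split fraction, and seed are illustrative choices.

```python
import random


def patient_level_split(
    slides: list[tuple[str, str]], test_fraction: float = 0.2, seed: int = 0
) -> tuple[list[tuple[str, str]], list[tuple[str, str]]]:
    """Split (slide_id, patient_id) records so each patient lands in exactly one set."""
    patients = sorted({patient for _, patient in slides})  # sort first for reproducibility
    random.Random(seed).shuffle(patients)
    n_test = max(1, round(len(patients) * test_fraction))
    test_patients = set(patients[:n_test])
    train = [s for s in slides if s[1] not in test_patients]
    test = [s for s in slides if s[1] in test_patients]
    return train, test
```

The same grouping idea extends to external validation: using the institution instead of the patient as the grouping key holds out entire sites, which is much closer to the cross-site setting this article is concerned with.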

These factors collectively explain why many AI-enabled medical devices show strong retrospective metrics yet struggle in multi-center deployment.

What Robust External Validation Requires

If cancer AI is to scale safely and sustainably, external validation must become infrastructure, not an afterthought.

Best practice for external validation in oncology AI includes:

- Independent multi-center test datasets

- Explicit reporting of scanner, stain, and preparation metadata

- Cross-site performance stratification

- Ongoing post-deployment monitoring for model drift
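Cross-site performance stratification can be as simple as computing the same metric separately for each site instead of only on the pooled test set. The sketch below computes a rank-based AUC (the fraction of positive/negative pairs in which the positive case scores higher, counting ties as one half) per site. The record layout (site, label, score) and the toy data are assumptions for illustration.

```python
from collections import defaultdict


def auc(labels: list[int], scores: list[float]) -> float:
    """Rank-based AUC: share of (positive, negative) pairs where the positive scores higher.

    Ties count as half a win. Returns NaN when either class is absent, since
    AUC is undefined for a single-class group.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        return float("nan")
    wins = 0.0
    for p in pos:  # O(n_pos * n_neg): fine for a sketch, slow for large test sets
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def auc_by_site(records: list[tuple[str, int, float]]) -> dict[str, float]:
    """Group (site, label, score) records by site and compute AUC for each site."""
    labels: dict[str, list[int]] = defaultdict(list)
    scores: dict[str, list[float]] = defaultdict(list)
    for site, label, score in records:
        labels[site].append(label)
        scores[site].append(score)
    return {site: auc(labels[site], scores[site]) for site in labels}


if __name__ == "__main__":
    # Toy data: site A is ranked perfectly, site B is inverted.
    records = [
        ("A", 1, 0.9), ("A", 0, 0.1), ("A", 1, 0.8), ("A", 0, 0.2),
        ("B", 1, 0.3), ("B", 0, 0.7),
    ]
    pooled = auc([r[1] for r in records], [r[2] for r in records])
    print(f"pooled AUC: {pooled:.2f}")  # pooled AUC: 0.89
    print(f"per-site AUC: {auc_by_site(records)}")  # per-site AUC: {'A': 1.0, 'B': 0.0}
```

In this toy example the pooled AUC still looks strong while the per-site view exposes a site where the model ranks cases backwards, which is exactly the failure that pooled reporting hides.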

Emerging work on validation infrastructure in medical imaging emphasizes that clinical AI readiness depends on systematic evaluation pipelines, not single retrospective studies [5].
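Post-deployment drift monitoring, the last item in the list above, can start with a comparison between the model's score distribution at validation time and its score distribution in production. The sketch below uses the population stability index (PSI), a binned statistic that is zero when the two distributions match and grows as they diverge. It assumes scores lie in [0, 1]; the bin count, the small floor that avoids log(0), and the alert threshold are illustrative assumptions, not clinical standards.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population stability index between reference and current score samples.

    Scores are assumed to lie in [0, 1] and are bucketed into `bins` equal-width
    bins. PSI = sum over bins of (a_i - e_i) * ln(a_i / e_i), where e_i and a_i
    are the reference and current proportions in bin i.
    """

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = min(max(int(v * bins), 0), bins - 1)  # clamp out-of-range values and v == 1.0
            counts[idx] += 1
        # Floor empty bins at eps so the log term stays finite.
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


ALERT_THRESHOLD = 0.25  # illustrative assumption; choose and validate per deployment


if __name__ == "__main__":
    reference = [i / 100 for i in range(100)]  # validation-time scores
    production = [0.9 + i / 1000 for i in range(100)]  # scores bunched near 1.0
    print(psi(reference, production) > ALERT_THRESHOLD)  # True
```

Score drift is only a proxy: it can flag a change in inputs (a new scanner, a new stain batch) before labels are available, but confirming an actual drop in accuracy still requires labeled follow-up data.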

External validation is not merely statistical confirmation. It reflects the maturity of data governance and the traceability of metadata.

Why This Matters for Pharma, Hospitals, and Regulators

For pharmaceutical companies, weak generalization limits AI-driven biomarker discovery and patient stratification across trial sites.

For hospitals, inconsistent cross-site performance undermines clinician trust in AI systems.

For regulators, insufficient documentation of dataset representativeness and data traceability creates compliance risk under evolving frameworks such as the EU AI Act.

In each case, the limiting factor is not model size or computational power. It is the robustness of the data ecosystem underlying the model.

From Model Innovation to Infrastructure Maturity

Cancer AI is entering its infrastructure phase.

The future of robust oncology AI deployment will depend on:

- Structured metadata

- Cross-institutional harmonization

- Transparent dataset traceability

- Lifecycle validation and monitoring
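Structured metadata and dataset traceability start with a schema that every slide record must satisfy. The sketch below is a hypothetical minimal schema (the field names are assumptions, chosen to mirror the scanner, stain, and preparation metadata discussed earlier) with a validator that rejects incomplete records and a per-site scanner count that makes acquisition imbalance visible.

```python
from collections import Counter
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class SlideMetadata:
    """Hypothetical minimal schema for acquisition and pre-analytical metadata."""

    slide_id: str
    site: str
    scanner_model: str
    stain_protocol: str
    fixation: str


REQUIRED_FIELDS = [f.name for f in fields(SlideMetadata)]


def validate(record: dict) -> SlideMetadata:
    """Build a SlideMetadata from a raw dict, rejecting missing or empty fields."""
    missing = [name for name in REQUIRED_FIELDS if not record.get(name)]
    if missing:
        raise ValueError(f"slide {record.get('slide_id', '?')}: missing {missing}")
    return SlideMetadata(**{name: record[name] for name in REQUIRED_FIELDS})


def scanner_coverage(records: list[SlideMetadata]) -> Counter:
    """Count slides per (site, scanner_model) pair to expose acquisition imbalance."""
    return Counter((r.site, r.scanner_model) for r in records)
```

Rejecting incomplete records instead of filling in defaults keeps gaps visible. A coverage table in which each site maps to a single scanner is a warning sign that site, scanner, and any site-correlated label may be confounded.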

External validation is becoming the true benchmark of quality in cancer AI.

The next generation of digital pathology AI will not be defined by who reports the highest internal AUC. It will be defined by who demonstrates reliable performance across institutions, populations, and clinical workflows.

Build on Infrastructure That Scales

Access harmonized, metadata-structured multinational cancer datasets enabling robust external validation, benchmarking, and regulatory-grade AI deployment across real-world clinical environments.

References

1. McGenity C, Clarke EL, Jennings C, et al. Artificial intelligence in digital pathology: a systematic review and meta-analysis of diagnostic test accuracy. npj Digit Med. 2024;7:114.

2. Stacke K, Eilertsen G, Unger J, Lundström C. A closer look at domain shift for deep learning in histopathology. arXiv preprint. 2019;arXiv:1909.11575.

3. Carloni G, Brattoli B, Keum S, et al. Pathology foundation models are scanner sensitive: benchmark and mitigation with contrastive ScanGen loss. arXiv preprint. 2025.

4. Bernett J, Blumenthal DB, Grimm DG, et al. Guiding questions to avoid data leakage in biological machine learning applications. Nat Methods. 2024;21(8):1444–1453.

5. Ramwala O, Lowry KP, Cross NM, et al. Establishing a validation infrastructure for imaging-based AI algorithms before clinical implementation. J Am Coll Radiol. 2024;21(10):1569–1574. doi:10.1016/j.jacr.2024.04.027.