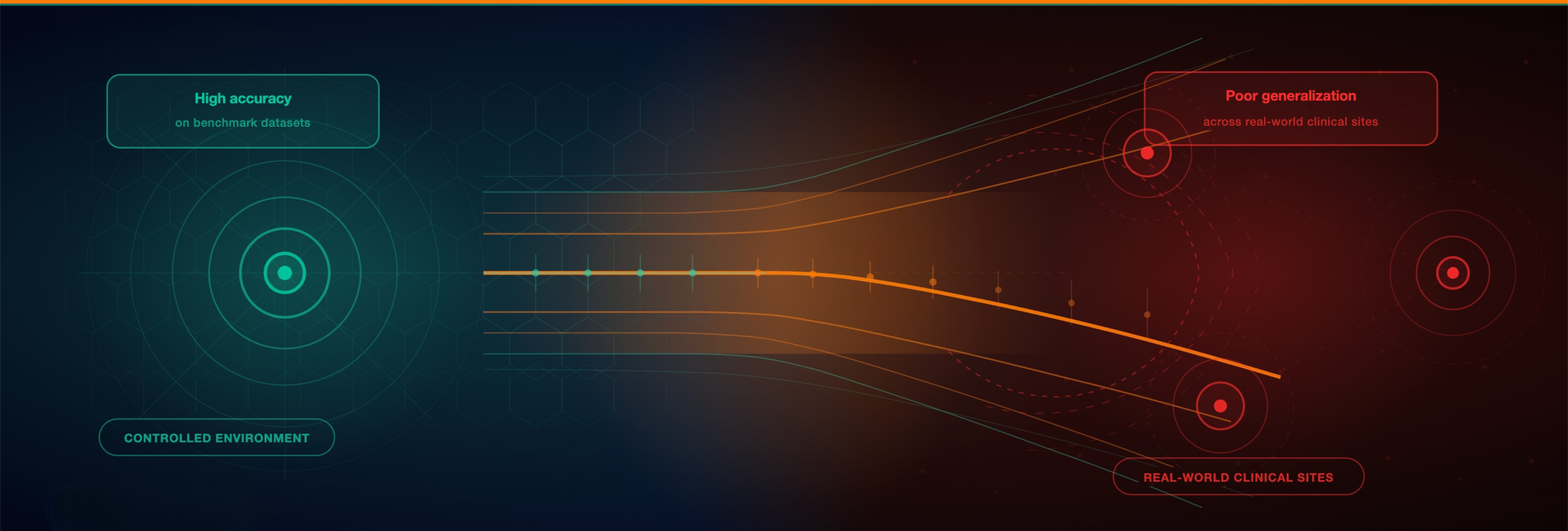

Artificial intelligence is rapidly advancing in healthcare, with models achieving impressive performance metrics across a range of diagnostic tasks. In computational pathology in particular, deep learning models have demonstrated strong capabilities in predicting molecular features, classifying tumors, and supporting clinical decision-making. However, a persistent and critical challenge remains: many AI systems that perform well in controlled research environments fail to deliver the same reliability when deployed in real-world clinical settings.

This gap between model accuracy and real-world performance is not a marginal issue. It represents one of the most critical barriers to the clinical adoption of AI. While models often achieve high scores on internal validation datasets, their performance can degrade significantly when applied across different hospitals, populations, or technical conditions. In high-stakes environments such as oncology, this lack of robustness is not just a technical limitation. It directly affects clinical trust, regulatory approval, and ultimately patient outcomes.

The Generalization Challenge in Healthcare AI

At the core of this problem lies a fundamental characteristic of healthcare data: variability. Clinical data, and especially histopathology images, are inherently heterogeneous. Differences in staining protocols, scanner hardware, image resolution, and clinical workflows introduce variations that are often imperceptible to human observers but highly influential for machine learning models.

Research has shown that AI systems can unintentionally learn site-specific or technical artifacts instead of clinically meaningful signals. For example, Zech et al. demonstrated that a model trained to detect pneumonia relied on hospital-specific markers rather than pathology, resulting in poor generalization across institutions [1]. Similar observations have been made in computational pathology, where models may capture center-specific features instead of underlying biological patterns.

This phenomenon reflects a broader issue known as distribution shift. As highlighted by Kelly et al. and Willemink et al., even subtle differences in how data is generated or collected can significantly impact model performance [2, 3]. In practice, this means that an AI system trained in one environment cannot be assumed to perform reliably in another.

Why High Accuracy Does Not Translate to Clinical Reliability

A common misconception in medical AI is that high accuracy on benchmark datasets equates to real-world readiness. In reality, many models are optimized for performance within narrowly defined datasets that do not reflect the complexity of clinical environments.

When models are evaluated on data that closely resembles their training distribution, they can achieve impressive results. However, when exposed to new populations, different scanners, or alternative clinical workflows, their performance may decline. This discrepancy highlights a critical limitation: accuracy alone is not a sufficient measure of clinical utility.
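
As a minimal sketch of why a single pooled score can mislead, the Python snippet below computes accuracy per site alongside the pooled figure. The site names and predictions are invented purely for illustration and do not come from any of the studies cited here; the helper names `accuracy_by_site` and `pooled_accuracy` are likewise hypothetical.

```python
from collections import defaultdict


def accuracy_by_site(records):
    """Return {site: accuracy} for an iterable of (site, y_true, y_pred) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for site, y_true, y_pred in records:
        total[site] += 1
        correct[site] += int(y_true == y_pred)
    return {site: correct[site] / total[site] for site in total}


def pooled_accuracy(records):
    """Return accuracy over all records, ignoring which site they came from."""
    records = list(records)
    return sum(int(y_true == y_pred) for _, y_true, y_pred in records) / len(records)


# Toy, made-up predictions: most samples come from the site the model handles well.
records = (
    [("hospital_A", 1, 1)] * 9 + [("hospital_A", 1, 0)] * 1
    + [("hospital_B", 1, 1)] * 3 + [("hospital_B", 0, 1)] * 2
)

per_site = accuracy_by_site(records)
overall = pooled_accuracy(records)

print(f"pooled accuracy: {overall:.2f}")
for site, acc in sorted(per_site.items()):
    print(f"{site}: {acc:.2f}")
```

In this toy case the pooled figure sits between the two sites and hides the weaker result at the smaller one, which is why reporting performance per institution, scanner, or population can be more informative than one aggregate number.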

Studies such as that of Campanella et al. have demonstrated that models trained on large datasets may still fail to generalize when evaluated on external cohorts [4]. This suggests that the challenge is not simply the availability of data, but how well that data represents real-world variability.

Recent research in computational pathology supports this view, showing that models trained on diverse, multi-institutional datasets can achieve strong performance even with fewer training samples. These findings indicate that exposure to variability during training is essential for building robust systems.

From Performance Metrics to Real-World Robustness

Addressing the generalization gap requires a shift in how AI systems are developed and evaluated. Instead of focusing solely on benchmark performance, greater emphasis must be placed on robustness across different clinical settings.

This includes ensuring that models are exposed to variability during training, evaluated on external datasets, and designed to prioritize clinically meaningful features over dataset-specific patterns. It also requires a broader perspective on validation, one that reflects the conditions under which models will ultimately be used.
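
One way to approximate external evaluation when data from several institutions is available is a leave-one-site-out split: each site is held out in turn and the model is trained only on the others. The sketch below shows the splitting logic in plain Python. The `site` field, the sample records, and the function name `leave_one_site_out` are assumptions made for illustration, not a protocol taken from the cited work.

```python
def leave_one_site_out(samples):
    """Yield (held_out_site, train, test) with each site held out exactly once.

    `samples` is a list of dicts that each carry a "site" key (hypothetical schema).
    """
    sites = sorted({sample["site"] for sample in samples})
    for held_out in sites:
        train = [s for s in samples if s["site"] != held_out]
        test = [s for s in samples if s["site"] == held_out]
        yield held_out, train, test


# Made-up slide records from three hypothetical institutions.
samples = [
    {"slide_id": "s1", "site": "site_A"},
    {"slide_id": "s2", "site": "site_A"},
    {"slide_id": "s3", "site": "site_B"},
    {"slide_id": "s4", "site": "site_C"},
    {"slide_id": "s5", "site": "site_C"},
]

splits = list(leave_one_site_out(samples))
for held_out, train, test in splits:
    # No slide from the held-out site may appear in the training set.
    assert all(s["site"] != held_out for s in train)
    print(held_out, "train:", len(train), "test:", len(test))
```

Splitting by site rather than by individual slide keeps scanner and staining characteristics of the held-out institution entirely unseen during training, which is closer to the deployment scenario described above than a random split.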

For healthcare AI to move from research to routine clinical practice, reliability must become the primary objective. This means building systems that perform consistently across institutions, populations, and technologies, not just under ideal conditions.

At PAICON, this perspective is reflected in the development of AI solutions that prioritize real-world applicability, leveraging multi-institutional data and structured data approaches to support robust model performance across diverse clinical environments.

Evidence in Practice

What does it actually take to build AI models that generalize across real-world clinical settings?

In our latest publication, we show that data diversity across institutions, populations, and technologies enables strong performance even with significantly fewer training samples. The results challenge the assumption that larger datasets alone drive better models and provide concrete evidence for a more robust and efficient approach to computational pathology [5].

Read the full publication:

Diversity over scale: Whole slide image variety enables H&E foundation model training with fewer patches

Access Diverse, Real-World Data

Improving generalization in healthcare AI starts with the right data.

If you are developing AI models and encountering limitations in real-world performance, access to diverse, multi-institutional data can be a critical factor. We collaborate with partners to provide structured datasets that reflect real clinical variability and support robust model development.

Reach out to explore data access and collaboration opportunities.

References

1. Zech JR, Badgeley MA, Liu M, Costa AB, Titano JJ, Oermann EK. Variable generalization performance of a deep learning model to detect pneumonia in chest radiographs: a cross-sectional study. PLoS Med. 2018;15(11):e1002683.
2. Kelly CJ, Karthikesalingam A, Suleyman M, Corrado G, King D. Key challenges for delivering clinical impact with artificial intelligence. BMC Med. 2019;17(1):195.
3. Willemink MJ, Koszek WA, Hardell C, Wu J, Fleischmann D, Harvey H, et al. Preparing medical imaging data for machine learning. Radiology. 2020;295(1):4–15.
4. Campanella G, Hanna MG, Geneslaw L, Miraflor A, Silva VWK, Busam KJ, et al. Clinical-grade computational pathology using weakly supervised deep learning on whole slide images. Nat Med. 2019;25(8):1301–1309.
5. Bosch C, Wong JKL, Paulikat M, Zapukhlyak M, Arora B, Aichmüller-Ratnaparkhe M, et al. Diversity over scale: Whole-slide image variety enables H&E foundation model training with fewer patches. J Pathol Inform. 2026;21:100648.