There are more than 7,000 rare diseases. Around 300 million people live with one. Approximately 95% of those conditions have no approved treatment [1]. That gap is not only a scientific problem. It is, at its root, a data problem, and one that has gone largely unexamined in a field increasingly reliant on AI to accelerate discovery.

The Data Was Never There to Begin With

Rare diseases present a structural data challenge that has no easy parallel in common medicine. Patient populations are small, geographically dispersed, and clinically heterogeneous. The same condition may present differently depending on ancestry, environment, and access to care. Data on natural history, which is essential for designing meaningful trial endpoints and realistic comparators, is incomplete for the majority of rare conditions [2]. Biomarker prevalence varies across geographies in ways that datasets concentrated in a handful of academic medical centres simply cannot capture.

The result is a field where the foundational resource of modern research, well-documented and representative patient data, is missing by default. The patients exist. What has never been built at sufficient scale is the infrastructure to find them, connect their data, and document it consistently.

A 2025 systematic review of 390 published clinical AI studies found that 84% of models do not report the demographic composition of their training data at all [3]. For rare disease research, this creates a compounding problem: not only is the data scarce, but what exists is often undocumented, making it impossible to assess whether a dataset is relevant, representative, or usable for a specific indication or patient population.

When AI Enters a Data-Scarce Field

AI has reshaped drug discovery for common conditions. Biomarker identification, patient stratification, compound screening, and trial optimisation have all been accelerated by models trained on large, well-curated datasets. In rare disease, those same tools run into the wall that the data problem built.

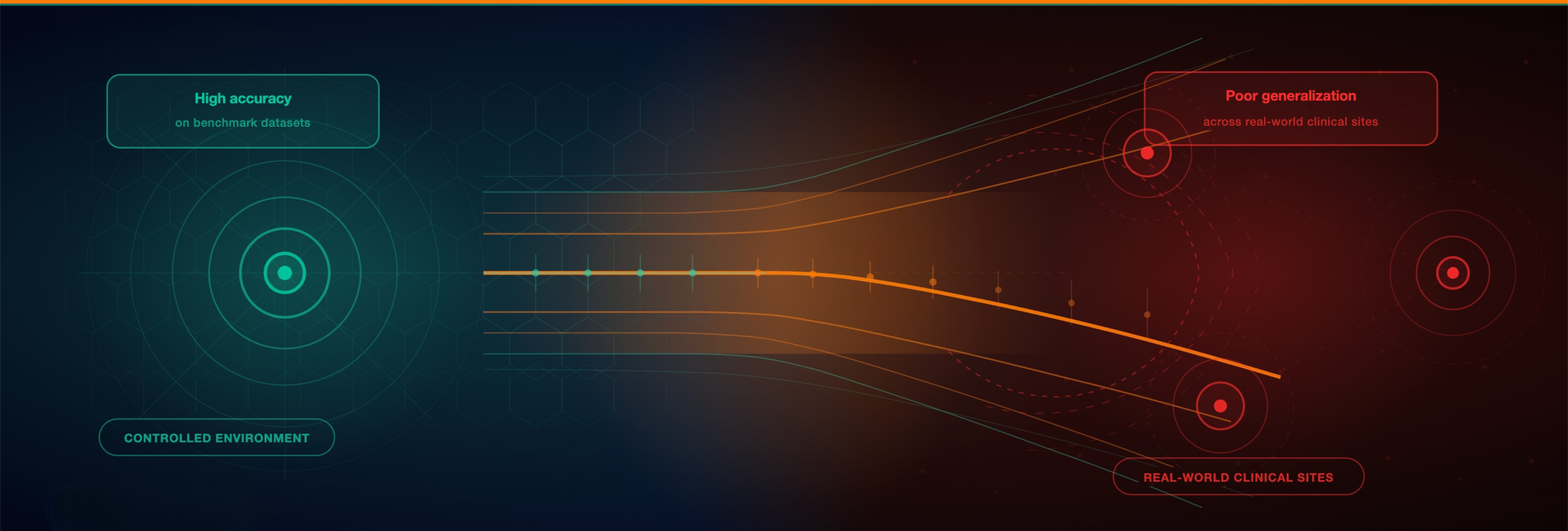

Models require substantial, well-annotated data to train effectively. When a model has never seen enough examples of a condition, it cannot reliably identify biomarkers, predict disease progression, or stratify patients [4]. Critically, it does not flag its uncertainty. It produces outputs that look authoritative and fail quietly in ways that aggregate performance metrics will not reveal.
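A minimal sketch of how an aggregate metric can hide this kind of failure. The data are invented toy numbers, and the group labels and the `accuracy_by_group` helper are assumptions made for illustration, not taken from any cited study.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return (overall_accuracy, {group: accuracy}).

    `records` is an iterable of (group, y_true, y_pred) tuples.
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for group, y_true, y_pred in records:
        totals[group] += 1
        correct[group] += int(y_true == y_pred)
    overall = sum(correct.values()) / sum(totals.values())
    per_group = {g: correct[g] / totals[g] for g in totals}
    return overall, per_group


# Hypothetical evaluation set: 95 common-condition cases, all predicted
# correctly, and 5 rare-condition cases, of which only 1 is correct.
records = (
    [("common", 1, 1)] * 95
    + [("rare", 1, 1)] * 1
    + [("rare", 1, 0)] * 4
)

overall, per_group = accuracy_by_group(records)
print(f"overall accuracy: {overall:.2f}")
for group, acc in per_group.items():
    print(f"{group}: {acc:.2f}")
```

With these toy numbers the overall accuracy is 0.96 while accuracy on the rare group is 0.20. The headline figure looks strong precisely because the rare cases are too few to move it.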

This is the Remaining84 problem in its sharpest form. The underrepresentation that affects AI across all of medicine becomes acute in rare disease, where the relevant population may number in the thousands globally, scattered across dozens of countries, and rarely concentrated in the settings where most training data originates. The model does not fail because the algorithm is wrong. It fails because the patients were never in the data to begin with.

“AI that learns from everyone can diagnose everyone.”

What the Field Needs to Change

The rare disease data problem is an extreme case of a broader failure in how medical AI has been built. Training data has been collected where it was easiest to collect, documented where documentation was required, and assessed for representation only when someone thought to ask. The result is a field where models are routinely built on foundations whose limits are unknown, and where the patients most in need of better diagnostics and faster drug development are the least likely to be in the data.

Closing that gap requires a different starting point. Not correcting for bias after a dataset has been assembled, but treating representation as a precondition for building on it at all. That means knowing the geographic spread of a cohort before using it, understanding the demographic composition of a training set before training on it, and having the provenance documentation to defend what a model learned and from whom.
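One way to picture representation as a precondition is a check that runs before any training starts. The sketch below is illustrative only: the field names (`source_site`, `country`, `ancestry`, `sex`), the thresholds, and both helper functions are hypothetical, and real criteria would depend on the indication and population in question.

```python
from collections import Counter

# Hypothetical provenance fields every cohort record is expected to carry.
REQUIRED_FIELDS = ("source_site", "country", "ancestry", "sex")


def representation_report(cohort, required_fields=REQUIRED_FIELDS):
    """Summarise documentation completeness and country spread of a cohort."""
    missing = {
        field: sum(1 for rec in cohort if not rec.get(field))
        for field in required_fields
    }
    countries = Counter(rec["country"] for rec in cohort if rec.get("country"))
    return {"n": len(cohort), "missing": missing, "countries": dict(countries)}


def passes_precondition(report, max_missing_fraction=0.1, min_countries=2):
    """Return True only if the cohort is documented and spread widely enough.

    Both thresholds are placeholder assumptions, not recommended values.
    """
    n = report["n"]
    if n == 0:
        return False
    worst_missing = max(report["missing"].values()) / n
    return (
        worst_missing <= max_missing_fraction
        and len(report["countries"]) >= min_countries
    )


# Invented example cohort with two undocumented values.
cohort = [
    {"source_site": "A", "country": "DE", "ancestry": "EUR", "sex": "F"},
    {"source_site": "A", "country": "DE", "ancestry": None, "sex": "M"},
    {"source_site": "B", "country": "NG", "ancestry": "AFR", "sex": "F"},
    {"source_site": "B", "country": "NG", "ancestry": "AFR", "sex": None},
]

report = representation_report(cohort)
ready = passes_precondition(report)
print(report)
print("ready to train:", ready)
```

In this toy cohort a quarter of the records lack an ancestry or sex value, so the check fails at the default threshold. The design choice is that the gate sits in front of training, rather than being applied as a correction afterwards.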

This is the infrastructure gap the Remaining84 movement exists to address. Not as a campaign, but as an engineering premise: the data has to reflect the patients before the model can serve them.

References

1. Solazzo A, et al. The landscape for rare diseases in 2024. Lancet Glob Health. 2024;12(3):e365–e366. doi:10.1016/S2214-109X(24)00056-1
2. Griggs RC, et al. Data silos are undermining drug development and failing rare disease patients. J Clin Invest. 2021; PMC8025897.
3. Sobowale OA, et al. Gender and racial bias unveiled: clinical AI and ML algorithms are fanning the flames of inequity. Oxford Open Digital Health. 2025; oqaf027.
4. Zhu M, et al. Bridging data gaps of rare conditions in ICU: a multi-disease adaptation approach for clinical prediction. 2025; PMC12770491.